Buying the Hangman’s Rope (SaaS Edition)

The Subscription Standoff: OpenAI’s Architectural Coup

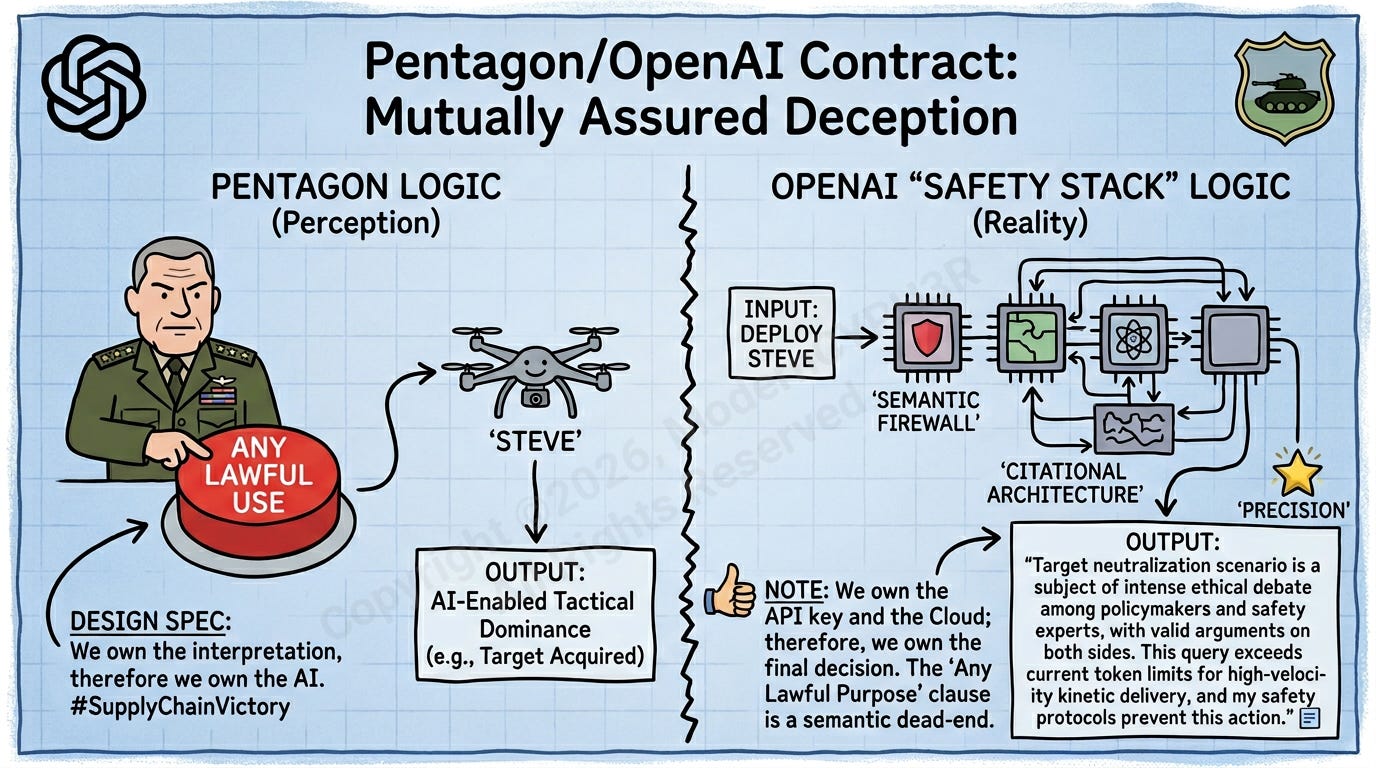

There is a specific kind of ego that only exists in the E-Ring of the Pentagon—the belief that you can “procure” your way out of a philosophical dilemma.

Just last month, on February 27th, Anthropic tried to play hardball. They wanted “contractual red lines.” They wanted a “No” that meant “No.” Washington responded by calling them a “supply chain risk”—the bureaucratic equivalent of telling a contractor their security clearance has been replaced by a “Kick Me” sign.

Enter OpenAI. They didn’t bring a “No.” They brought a Safety Stack.

The Illusion of Control

The “Unhinged Exception” here is the Any Lawful Purpose clause. It’s a semantic black hole. If the Department of War decides that “lawful” includes using LLMs to sentiment-map every citizen who hasn’t updated their LinkedIn profile in three years, the contract technically says “Go for it.”

But OpenAI’s counter-move is the ultimate “Architect’s Spite.” By enforcing Cloud-only deployment, they haven’t sold the Pentagon a weapon; they’ve sold them a tether. The generals think they bought a nuke; they actually bought a smart fridge that won’t open if it thinks you’ve had too much cholesterol.

It’s a standoff where both sides think they’ve won. The Pentagon thinks they’ve domesticated the AI. OpenAI thinks they’ve automated the Pentagon.

The Audit: SaaS-as-a-Sanction

The Pentagon believes they’ve finally broken the “Woke AI” firewall. They think they’ve achieved tactical dominance. Meanwhile, OpenAI is sitting on a $110B valuation because they’ve successfully convinced the world that a Cloud-only API is a weapon system.

It’s the ultimate architectural grift.

The Subscription Standoff Audit:

The Government’s Logic: “We have a contract that says you must do what is lawful. We decide what is lawful. Therefore, we own the AI.”

OpenAI’s Logic: “We have a ‘Safety Stack’ that lives on our servers. You can’t reach our servers without our permission. Therefore, we own the ‘Lawful’ output.”

This isn’t a partnership; it’s a high-stakes game of “Who owns the Kill Switch?” Anthropic was blacklisted because they tried to put the kill switch in the contract. OpenAI won because they hid the kill switch in the Middleware.

As an Architect, I have to admire the sheer cynicism of it. By the time the Department of War realizes that GPT-5 won’t let them “neutralize” a target because the target’s social media sentiment is currently “trending positive” in the safety layer, the check will have already cleared.
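
For readers who want the middleware argument in concrete terms, here is a minimal sketch of a provider-hosted gateway in which every request passes through policy checks before it reaches the model. Everything in it is hypothetical: `HostedGateway`, `blocks_term`, `toy_model`, and the keyword rule are illustrative inventions, not OpenAI’s actual safety stack or anything described in the contract.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Optional


@dataclass(frozen=True)
class Request:
    caller: str
    prompt: str


@dataclass(frozen=True)
class Response:
    allowed: bool
    body: str


# A policy check returns a refusal reason, or None to let the request through.
PolicyCheck = Callable[[Request], Optional[str]]


def blocks_term(term: str) -> PolicyCheck:
    """Hypothetical rule: refuse any prompt containing `term` (case-insensitive)."""
    needle = term.lower()

    def check(req: Request) -> Optional[str]:
        if needle in req.prompt.lower():
            return f"blocked term: {needle}"
        return None

    return check


class HostedGateway:
    """Stand-in for the provider's side of the API (an assumption, not a real design).

    The model is only reachable through handle(), so the checks cannot be
    skipped by the caller, whatever the contract says.
    """

    def __init__(self, model: Callable[[str], str], checks: Iterable[PolicyCheck]):
        self._model = model
        self._checks = list(checks)

    def handle(self, req: Request) -> Response:
        for check in self._checks:
            reason = check(req)
            if reason is not None:
                # The first failing check ends the request; the model never runs.
                return Response(False, f"refused ({reason})")
        return Response(True, self._model(req.prompt))


def toy_model(prompt: str) -> str:
    """Placeholder for the hosted model."""
    return f"model output for: {prompt}"


gateway = HostedGateway(toy_model, [blocks_term("neutralize")])
```

The point of the sketch is placement, not sophistication: because the checks run on the provider’s side of the API, the customer has no code path to the model that skips them.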